Understanding the Differences Between Thermistors and RTD Sensors

Choosing a temperature sensor for a specific application can be overwhelming. Engineers have several options, and anyone unfamiliar with sensor calibration can feel lost. Learning the details of the two most popular sensor types can help you weigh the pros and cons of each. What are the main differences between thermistors and RTD sensors?

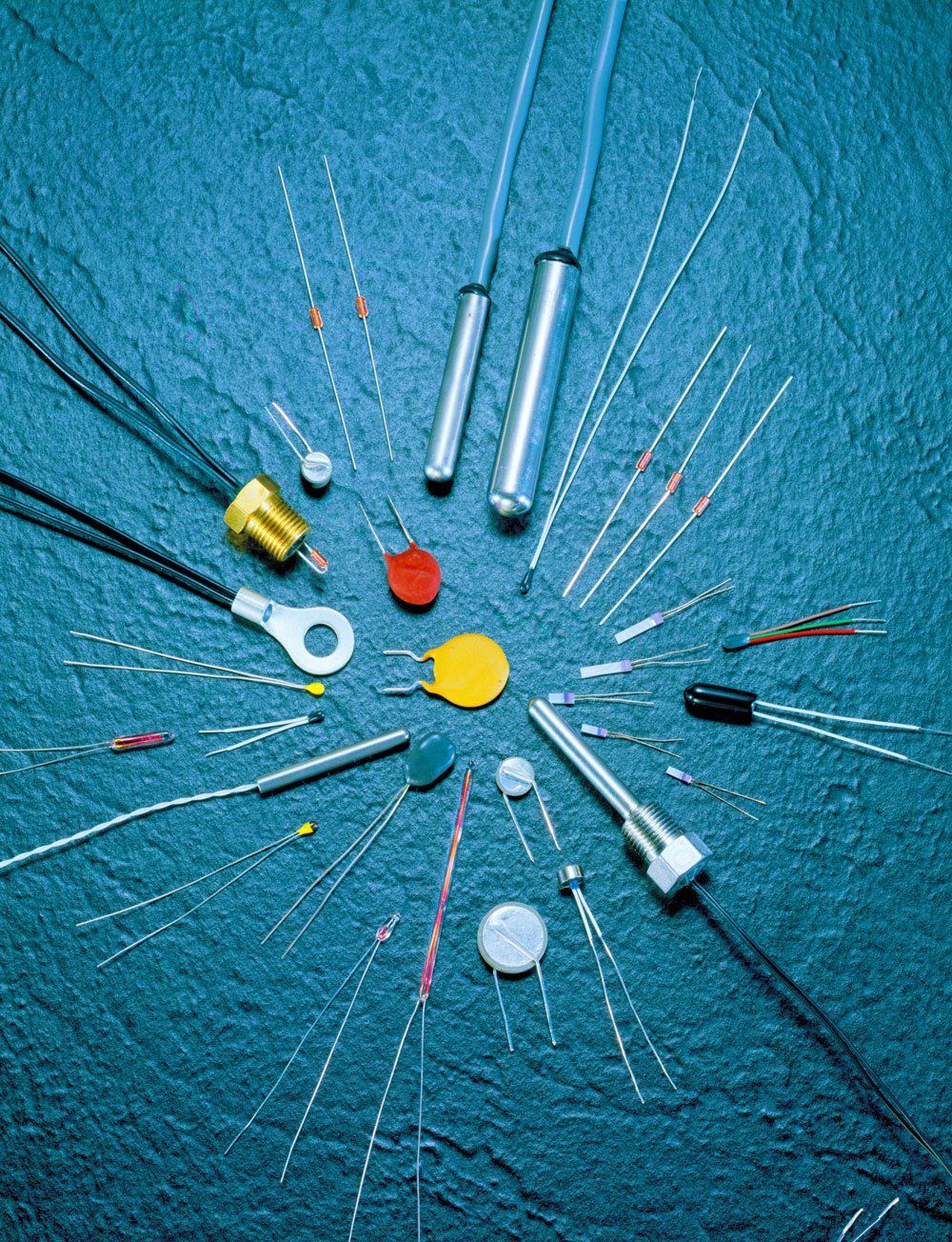

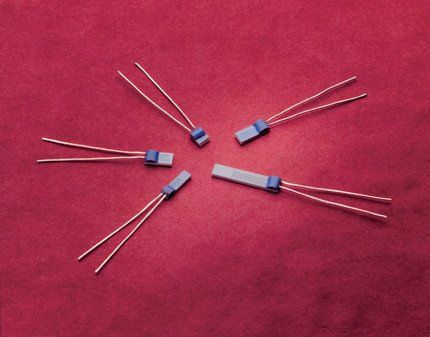

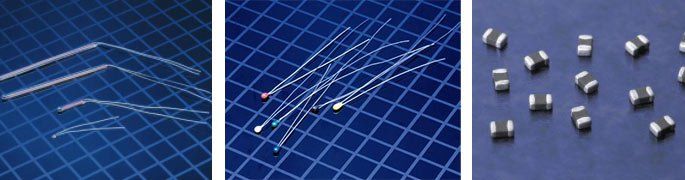

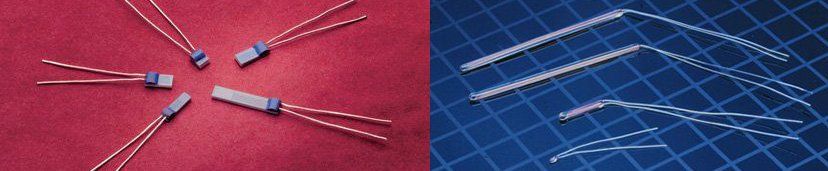

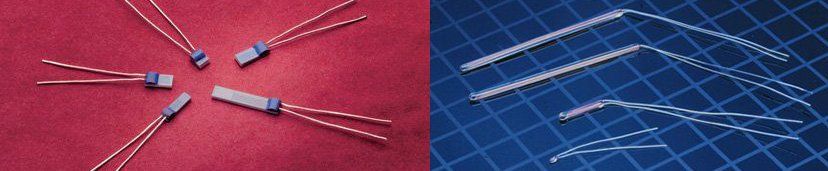

An RTD, or resistance temperature detector, is a sensor that measures temperature from the change in resistance of a metal resistor. Of all RTDs on the market, the PT100 sensor is the most popular. Its sensing element is platinum, with a nominal resistance of 100 ohms at 0°C. These sensors are typically used to measure temperature in both industrial and laboratory processes. They are widely known for their accuracy, stability, and high repeatability. In fact, the PT100 is considered one of the most accurate sensors available.
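To make the resistance-to-temperature relationship concrete, here is a minimal Python sketch of the Callendar-Van Dusen equation commonly used for platinum RTDs. The coefficients are the standard IEC 60751 values, which this article does not list, so treat them as an assumption; the function names are hypothetical and chosen only for illustration.

```python
import math

# Assumed IEC 60751 coefficients for a standard PT100 (not given in the article).
R0 = 100.0        # nominal resistance in ohms at 0 °C
A = 3.9083e-3
B = -5.775e-7
C = -4.183e-12    # only applies below 0 °C


def pt100_resistance(temp_c: float) -> float:
    """Return the nominal PT100 resistance in ohms at temp_c (°C)."""
    if temp_c >= 0:
        return R0 * (1 + A * temp_c + B * temp_c**2)
    # Below 0 °C the equation gains an extra correction term.
    return R0 * (1 + A * temp_c + B * temp_c**2 + C * (temp_c - 100) * temp_c**3)


def pt100_temperature(resistance_ohm: float) -> float:
    """Invert the equation for readings at or above 0 °C (quadratic solution)."""
    if resistance_ohm < R0:
        raise ValueError("closed-form inverse in this sketch covers 0 °C and above only")
    return (-A + math.sqrt(A * A - 4 * B * (1 - resistance_ohm / R0))) / (2 * B)


print(pt100_resistance(0.0))                   # nominal value at 0 °C
print(round(pt100_resistance(100.0), 4))       # nominal value at 100 °C
print(round(pt100_temperature(138.5055), 3))   # back to a temperature in °C
```

The curve is close to a straight line over ordinary process temperatures, which is part of why RTD readings are easy to convert and repeat well.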

PT100 RTD

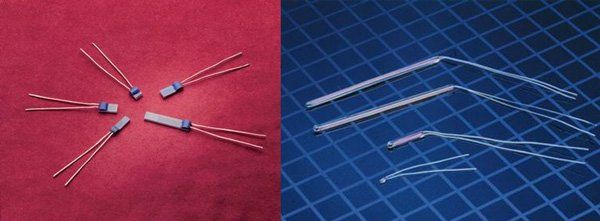

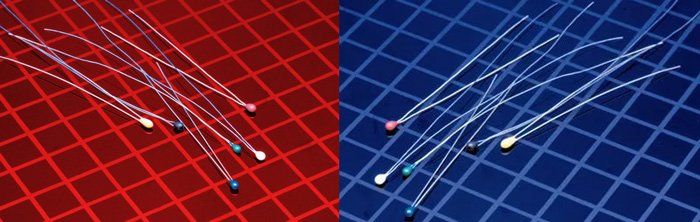

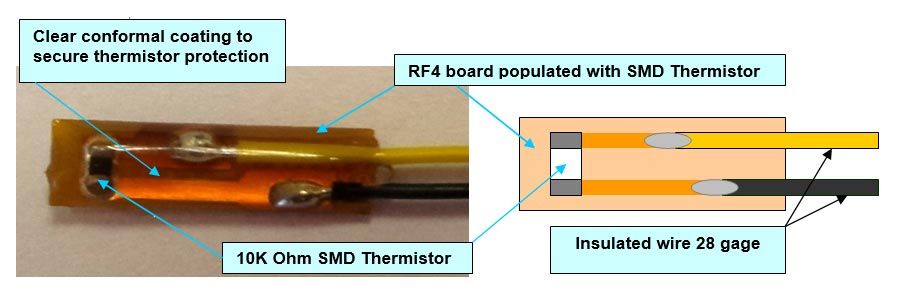

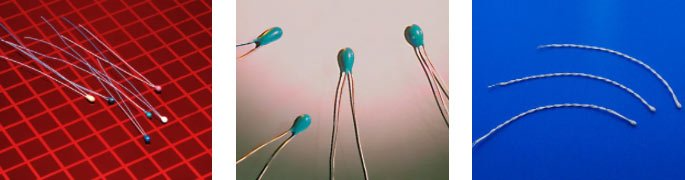

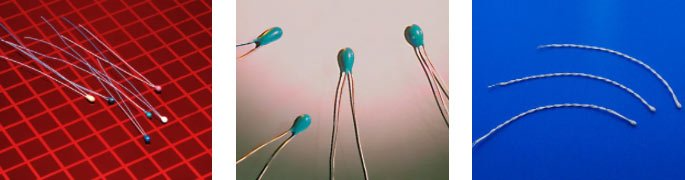

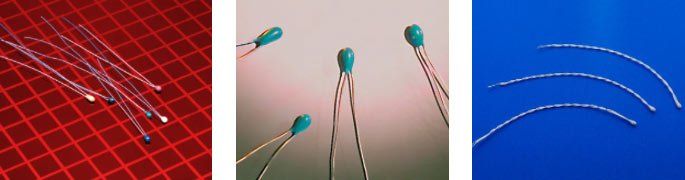

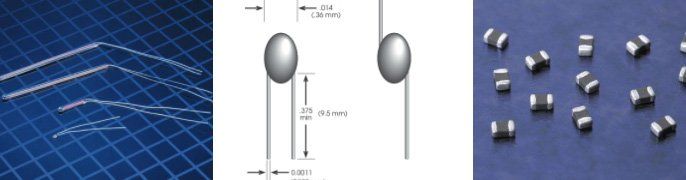

Thermistors share similarities with RTDs, but the two are different enough that they should not be used interchangeably. A thermistor is constructed from sintered semiconductor materials that exhibit a large change in resistance in response to small changes in temperature. Unlike RTDs, whose response is nearly linear, a thermistor's resistance changes non-linearly with temperature. The devices reduce their resistance as the temperature increases (negative temperature coefficient, or NTC, behavior). The main reasons to choose a thermistor over an RTD are:

- They have greater resolution because of a larger resistance change per degree of temperature

- They offer users excellent interchangeability

- They are small, which means they respond quickly to changes in temperature
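The resolution advantage and the non-linear response can be illustrated with a short Python sketch. It uses the Beta-parameter model, a common simplification for NTC thermistors, and assumes a hypothetical 10 kΩ part with a Beta of 3950 K; neither value comes from this article. The PT100 figure of about 0.385 ohms per °C is the usual nominal slope and is included only for comparison.

```python
import math

# Hypothetical NTC thermistor parameters (illustrative assumptions only).
R25_OHM = 10_000.0   # resistance at 25 °C
BETA_K = 3950.0      # Beta constant in kelvin
T25_K = 298.15       # 25 °C expressed in kelvin

# Approximate nominal PT100 slope, for comparison.
PT100_OHM_PER_DEGC = 0.385


def ntc_resistance(temp_c: float) -> float:
    """Beta-model resistance in ohms at temp_c (°C)."""
    temp_k = temp_c + 273.15
    return R25_OHM * math.exp(BETA_K * (1.0 / temp_k - 1.0 / T25_K))


def ntc_sensitivity(temp_c: float) -> float:
    """Slope dR/dT in ohms per °C; negative because resistance falls as temperature rises."""
    temp_k = temp_c + 273.15
    return -ntc_resistance(temp_c) * BETA_K / temp_k**2


for t in (0.0, 25.0, 50.0):
    print(t, round(ntc_resistance(t), 1), round(ntc_sensitivity(t), 1))

# Ratio of thermistor slope to PT100 slope at 25 °C.
print(abs(ntc_sensitivity(25.0)) / PT100_OHM_PER_DEGC)
```

Under these assumptions the thermistor changes by hundreds of ohms per degree near room temperature, while the slope itself varies strongly across the range, which is the non-linearity described above.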

Thermistor Resistance and Bias Current

When choosing a thermistor and a bias current, it is crucial to pick a combination where the voltage developed across the thermistor is in the middle of the range. Remember, controller feedback inputs need to be a voltage, which is derived from the thermistor resistance.
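The voltage across the thermistor follows Ohm's law, V = I × R, so the bias current can be estimated from the thermistor resistance at the operating point. The Python sketch below assumes that "the range" means the controller's feedback input range, and it uses a hypothetical 0 V to 5 V input and a 10 kΩ setpoint resistance; the function names are illustrative, not part of any controller's API.

```python
def bias_current_for_midrange(r_setpoint_ohm: float, v_min: float, v_max: float) -> float:
    """Bias current (A) that places the setpoint voltage at the middle of the input range."""
    v_mid = (v_min + v_max) / 2.0
    return v_mid / r_setpoint_ohm


def thermistor_voltage(i_bias_a: float, r_ohm: float) -> float:
    """Voltage (V) developed across the thermistor: V = I * R."""
    return i_bias_a * r_ohm


def fits_input_range(i_bias_a: float, r_low_ohm: float, r_high_ohm: float,
                     v_min: float, v_max: float) -> bool:
    """Check that both resistance extremes stay inside the input range."""
    v_low = thermistor_voltage(i_bias_a, r_low_ohm)
    v_high = thermistor_voltage(i_bias_a, r_high_ohm)
    return v_min <= v_low and v_high <= v_max


# Hypothetical example: 10 kΩ at the setpoint, 0 V to 5 V feedback input.
i_bias = bias_current_for_midrange(10_000.0, 0.0, 5.0)
print(i_bias)                                    # bias current in amperes
print(thermistor_voltage(i_bias, 10_000.0))      # voltage at the setpoint
print(fits_input_range(i_bias, 4_000.0, 18_000.0, 0.0, 5.0))
```

Checking the resistance extremes matters because the thermistor's resistance swings widely over temperature, so a current that centers the setpoint can still push the hot or cold end outside the input range.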



6 Kings Bridge Road, Fairfield, NJ 07004, USA

Sensor Scientific, Inc | All Rights Reserved